Deep Learning (18-5) - YOLOv5 Smoke Detection Model

YOLO (You Only Look Once)

Smoke Detection Model

Downloading the YOLOv5 Model

In [1]:

%cd /content

!git clone https://github.com/ultralytics/yolov5

%cd yolov5

!pip install -qr requirements.txt

Out [1]:

/content

Cloning into 'yolov5'...

remote: Enumerating objects: 14740, done.

remote: Counting objects: 100% (85/85), done.

remote: Compressing objects: 100% (47/47), done.

remote: Total 14740 (delta 53), reused 66 (delta 38), pack-reused 14655

Receiving objects: 100% (14740/14740), 13.82 MiB | 31.88 MiB/s, done.

Resolving deltas: 100% (10144/10144), done.

/content/yolov5

|████████████████████████████████| 182 kB 31.0 MB/s

|████████████████████████████████| 62 kB 1.5 MB/s

|████████████████████████████████| 1.6 MB 54.8 MB/s

Downloading the Dataset

- Smoke dataset: https://public.roboflow.com/object-detection/wildfire-smoke/

In [2]:

%mkdir /content/yolov5/smoke

%cd /content/yolov5/smoke

!curl -L "https://public.roboflow.com/ds/qtkTj4e2yk?key=EK6FpQl68t" > roboflow.zip; unzip roboflow.zip; rm roboflow.zip

Out [2]:

/content/yolov5/smoke

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 901 100 901 0 0 186 0 0:00:04 0:00:04 --:--:-- 191

100 27.6M 100 27.6M 0 0 5405k 0 0:00:05 0:00:05 --:--:-- 144M

Archive: roboflow.zip

extracting: test/images/ck0rqdyeq4kxa08633xv2wqe1_jpeg.rf.30df6babe258cdc1d5a4e042262665e4.jpg

extracting: test/images/ck0qd918zic840701hnezgpgy_jpeg.rf.30de65aa3639b8a113db71a11bc2726c.jpg

...

extracting: valid/labels/ck0km26n78w6b0944d8vapoyy_jpeg.rf.dca702237c131e91e5ff8aac7758251b.txt

extracting: valid/labels/ck0kksymy5z9j0794x0i3rdvo_jpeg.rf.fd456fe4ab48c81800a19519d6b99ee9.txt

extracting: README.roboflow.txt

extracting: README.dataset.txt

In [3]:

# Load the image file lists

from glob import glob

train_img_list = glob('/content/yolov5/smoke/train/images/*.jpg')

test_img_list = glob('/content/yolov5/smoke/test/images/*.jpg')

valid_img_list = glob('/content/yolov5/smoke/valid/images/*.jpg')

len(train_img_list), len(test_img_list), len(valid_img_list)

Out [3]:

(516, 74, 147)
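As a quick sanity check on these counts, the share of each split can be worked out directly. This is only an illustrative sketch; `split_ratios` is a hypothetical helper name that does not appear in the original notebook.

```python
def split_ratios(n_train, n_test, n_valid):
    """Return each split's share of the whole dataset as a fraction."""
    total = n_train + n_test + n_valid
    return {
        "train": n_train / total,
        "test": n_test / total,
        "valid": n_valid / total,
    }


# Counts taken from Out [3] above.
ratios = split_ratios(516, 74, 147)
for name, share in ratios.items():
    print(f"{name}: {share:.1%}")
```

The 737 images are split roughly 70/10/20 between train, test, and valid.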

In [4]:

# Save the file lists to disk

with open('/content/yolov5/smoke/train.txt', 'w') as f:

    f.write('\n'.join(train_img_list)+'\n')

with open('/content/yolov5/smoke/test.txt', 'w') as f:

    f.write('\n'.join(test_img_list)+'\n')

with open('/content/yolov5/smoke/valid.txt', 'w') as f:

    f.write('\n'.join(valid_img_list)+'\n')
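Before training, it can help to confirm that every image has a matching label file. YOLO-format datasets keep labels in a `labels` directory next to `images`, with the same file stem and a `.txt` extension, which is the layout the unzip output above shows. The sketch below assumes that layout; `label_path_for` and `images_missing_labels` are hypothetical helper names.

```python
import os


def label_path_for(image_path):
    """Map .../images/name.jpg to .../labels/name.txt (YOLO directory layout)."""
    image_dir, file_name = os.path.split(image_path)
    split_dir, images_dir_name = os.path.split(image_dir)
    if images_dir_name != "images":
        raise ValueError(f"expected an 'images' directory, got: {image_dir}")
    stem, _ = os.path.splitext(file_name)
    return os.path.join(split_dir, "labels", stem + ".txt")


def images_missing_labels(image_paths):
    """Return the image paths whose label file does not exist on disk."""
    return [p for p in image_paths if not os.path.exists(label_path_for(p))]
```

In the notebook, `images_missing_labels(train_img_list)` should return an empty list if the download is intact.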

In [5]:

# Cell magic for writing a yaml file from a template

from IPython.core.magic import register_line_cell_magic

@register_line_cell_magic
def writetemplate(line, cell):

    with open(line, 'w') as f:

        f.write(cell.format(**globals()))
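The magic relies on `str.format`, which replaces `{name}` placeholders in the cell body with notebook globals of the same name. The sketch below reproduces that substitution outside a notebook; `render_template` is a hypothetical name, and the template strings are made up for illustration.

```python
def render_template(template, variables):
    """Fill {name} placeholders the way the writetemplate magic does."""
    return template.format(**variables)


template = "nc: {num_classes}  # number of classes\nnames: ['smoke']\n"
rendered = render_template(template, {"num_classes": "1"})
print(rendered)

# Literal braces must be doubled so that format() leaves them alone.
flow_style = render_template(
    "names: {{0: smoke}}  # nc={num_classes}", {"num_classes": "1"}
)
print(flow_style)
```

One consequence of this design is that a yaml template containing literal `{` or `}` needs them written as `{{` and `}}`. The templates in this post use only square brackets, so they pass through unchanged.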

In [6]:

%cat /content/yolov5/smoke/data.yaml

Out [6]:

train: ../train/images

val: ../valid/images

nc: 1

names: ['smoke']

In [7]:

%%writetemplate /content/yolov5/smoke/data.yaml

train: ../smoke/train/images

test: ../smoke/test/images

val: ../smoke/valid/images

nc: 1

names: ['smoke']

In [8]:

%cat /content/yolov5/smoke/data.yaml

Out [8]:

train: ../smoke/train/images

test: ../smoke/test/images

val: ../smoke/valid/images

nc: 1

names: ['smoke']

Model Configuration

In [9]:

import yaml

with open('/content/yolov5/smoke/data.yaml', 'r') as stream:

    num_classes = str(yaml.safe_load(stream)['nc'])  # read the number of classes

num_classes

Out [9]:

'1'

In [10]:

# Inspect the downloaded model definition

%cat /content/yolov5/models/yolov5s.yaml

Out [10]:

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license

# Parameters

nc: 80 # number of classes

depth_multiple: 0.33 # model depth multiple

width_multiple: 0.50 # layer channel multiple

anchors:

- [10,13, 16,30, 33,23] # P3/8

- [30,61, 62,45, 59,119] # P4/16

- [116,90, 156,198, 373,326] # P5/32

# YOLOv5 v6.0 backbone

backbone:

# [from, number, module, args]

[[-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4

[-1, 3, C3, [128]],

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8

[-1, 6, C3, [256]],

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16

[-1, 9, C3, [512]],

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32

[-1, 3, C3, [1024]],

[-1, 1, SPPF, [1024, 5]], # 9

]

# YOLOv5 v6.0 head

head:

[[-1, 1, Conv, [512, 1, 1]],

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 6], 1, Concat, [1]], # cat backbone P4

[-1, 3, C3, [512, False]], # 13

[-1, 1, Conv, [256, 1, 1]],

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 4], 1, Concat, [1]], # cat backbone P3

[-1, 3, C3, [256, False]], # 17 (P3/8-small)

[-1, 1, Conv, [256, 3, 2]],

[[-1, 14], 1, Concat, [1]], # cat head P4

[-1, 3, C3, [512, False]], # 20 (P4/16-medium)

[-1, 1, Conv, [512, 3, 2]],

[[-1, 10], 1, Concat, [1]], # cat head P5

[-1, 3, C3, [1024, False]], # 23 (P5/32-large)

[[17, 20, 23], 1, Detect, [nc, anchors]], # Detect(P3, P4, P5)

]

In [11]:

# Save a customized copy

%%writetemplate /content/yolov5/models/smoke_yolov5s.yaml

# Parameters

nc: {num_classes} # number of classes

depth_multiple: 0.33 # model depth multiple

width_multiple: 0.50 # layer channel multiple

anchors:

- [10,13, 16,30, 33,23] # P3/8

- [30,61, 62,45, 59,119] # P4/16

- [116,90, 156,198, 373,326] # P5/32

# YOLOv5 v6.0 backbone

backbone:

# [from, number, module, args]

[[-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4

[-1, 3, C3, [128]],

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8

[-1, 6, C3, [256]],

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16

[-1, 9, C3, [512]],

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32

[-1, 3, C3, [1024]],

[-1, 1, SPPF, [1024, 5]], # 9

]

# YOLOv5 v6.0 head

head:

[[-1, 1, Conv, [512, 1, 1]],

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 6], 1, Concat, [1]], # cat backbone P4

[-1, 3, C3, [512, False]], # 13

[-1, 1, Conv, [256, 1, 1]],

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 4], 1, Concat, [1]], # cat backbone P3

[-1, 3, C3, [256, False]], # 17 (P3/8-small)

[-1, 1, Conv, [256, 3, 2]],

[[-1, 14], 1, Concat, [1]], # cat head P4

[-1, 3, C3, [512, False]], # 20 (P4/16-medium)

[-1, 1, Conv, [512, 3, 2]],

[[-1, 10], 1, Concat, [1]], # cat head P5

[-1, 3, C3, [1024, False]], # 23 (P5/32-large)

[[17, 20, 23], 1, Detect, [nc, anchors]], # Detect(P3, P4, P5)

]

In [12]:

# Verify the change

%cat /content/yolov5/models/smoke_yolov5s.yaml

Out [12]:

# Parameters

nc: 1 # number of classes

depth_multiple: 0.33 # model depth multiple

width_multiple: 0.50 # layer channel multiple

anchors:

- [10,13, 16,30, 33,23] # P3/8

- [30,61, 62,45, 59,119] # P4/16

- [116,90, 156,198, 373,326] # P5/32

# YOLOv5 v6.0 backbone

backbone:

# [from, number, module, args]

[[-1, 1, Conv, [64, 6, 2, 2]], # 0-P1/2

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4

[-1, 3, C3, [128]],

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8

[-1, 6, C3, [256]],

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16

[-1, 9, C3, [512]],

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32

[-1, 3, C3, [1024]],

[-1, 1, SPPF, [1024, 5]], # 9

]

# YOLOv5 v6.0 head

head:

[[-1, 1, Conv, [512, 1, 1]],

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 6], 1, Concat, [1]], # cat backbone P4

[-1, 3, C3, [512, False]], # 13

[-1, 1, Conv, [256, 1, 1]],

[-1, 1, nn.Upsample, [None, 2, 'nearest']],

[[-1, 4], 1, Concat, [1]], # cat backbone P3

[-1, 3, C3, [256, False]], # 17 (P3/8-small)

[-1, 1, Conv, [256, 3, 2]],

[[-1, 14], 1, Concat, [1]], # cat head P4

[-1, 3, C3, [512, False]], # 20 (P4/16-medium)

[-1, 1, Conv, [512, 3, 2]],

[[-1, 10], 1, Concat, [1]], # cat head P5

[-1, 3, C3, [1024, False]], # 23 (P5/32-large)

[[17, 20, 23], 1, Detect, [nc, anchors]], # Detect(P3, P4, P5)

]

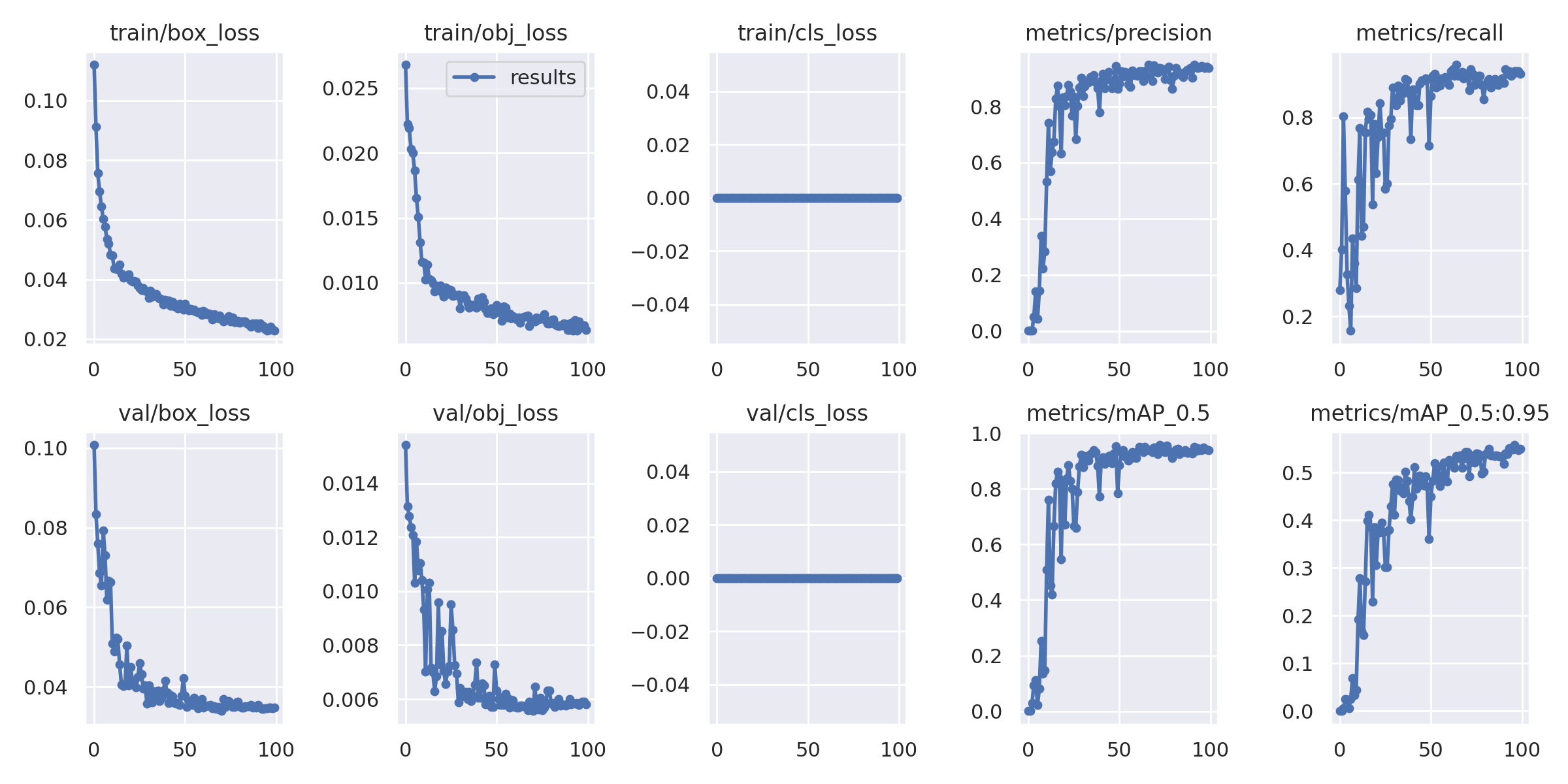
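The channel counts and repeat counts in the yaml are not used as written: they are scaled by `width_multiple` and `depth_multiple` when the model is built. The sketch below shows that arithmetic as I understand it from YOLOv5's model parser, so treat the rounding rules as an assumption rather than a quote from the library. `scaled_depth`, `scaled_width`, and `detect_params` are hypothetical helper names.

```python
import math


def scaled_depth(n, depth_multiple):
    """Number of repeats actually built for a module listed with n repeats."""
    return max(round(n * depth_multiple), 1) if n > 1 else n


def scaled_width(channels, width_multiple, divisor=8):
    """Output channels after scaling, rounded up to a multiple of divisor."""
    return math.ceil(channels * width_multiple / divisor) * divisor


def detect_params(nc, in_channels, anchors_per_scale=3):
    """Weights plus biases of the 1x1 convolutions in the Detect layer."""
    outputs = anchors_per_scale * (nc + 5)  # box (4) + objectness (1) + classes
    return sum(c * outputs + outputs for c in in_channels)


depth_multiple, width_multiple = 0.33, 0.50  # values from the yaml above

print([scaled_depth(n, depth_multiple) for n in (3, 6, 9, 3)])
print([scaled_width(c, width_multiple) for c in (64, 128, 256, 512, 1024)])
print(detect_params(nc=1, in_channels=(128, 256, 512)))
```

These figures agree with the model summary printed in the training log: the C3 blocks in the backbone are built with 1, 2, 3, and 1 repeats, the first Conv outputs 32 channels, and with `nc: 1` the Detect layer has 16182 parameters.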

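Training

- img: input image size
- batch: batch size
- epochs: number of training epochs
- data: path to the dataset yaml file
- cfg: model configuration file
- weights: path to the initial weights (when omitted, train.py falls back to yolov5s.pt, as the log below shows)
- name: name of the results folder
- nosave: save only the final checkpoint
- cache: cache images for faster training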
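The options described above map directly onto command-line flags of `train.py`. As a rough sketch, the same command can be assembled from a dictionary, which is convenient when several runs differ only in a few values. `build_train_command` is a hypothetical helper, not part of YOLOv5.

```python
def build_train_command(options, flags=()):
    """Turn an options dict into an argument list for train.py."""
    args = ["python", "train.py"]
    for name, value in options.items():
        args += [f"--{name}", str(value)]
    args += [f"--{flag}" for flag in flags]
    return args


command = build_train_command(
    {
        "img": 640,
        "batch": 32,
        "epochs": 100,
        "data": "./smoke/data.yaml",
        "cfg": "./models/smoke_yolov5s.yaml",
        "name": "smoke_result",
    },
    flags=("cache",),
)
print(" ".join(command))
```

The resulting list can be passed to `subprocess.run(command, check=True)` from the `/content/yolov5` directory.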

In [13]:

!pwd

Out [13]:

/content/yolov5/smoke

In [14]:

%cd /content/yolov5/

Out [14]:

/content/yolov5

In [15]:

%%time

!python train.py --img 640 --batch 32 --epochs 100 --data ./smoke/data.yaml \

--cfg ./models/smoke_yolov5s.yaml --name smoke_result --cache

Out [15]:

train: weights=yolov5s.pt, cfg=./models/smoke_yolov5s.yaml, data=./smoke/data.yaml, hyp=data/hyps/hyp.scratch-low.yaml, epochs=100, batch_size=32, imgsz=640, rect=False, resume=False, nosave=False, noval=False, noautoanchor=False, noplots=False, evolve=None, bucket=, cache=ram, image_weights=False, device=, multi_scale=False, single_cls=False, optimizer=SGD, sync_bn=False, workers=8, project=runs/train, name=smoke_result, exist_ok=False, quad=False, cos_lr=False, label_smoothing=0.0, patience=100, freeze=[0], save_period=-1, seed=0, local_rank=-1, entity=None, upload_dataset=False, bbox_interval=-1, artifact_alias=latest

github: up to date with https://github.com/ultralytics/yolov5 ✅

YOLOv5 🚀 v7.0-42-g5545ff3 Python-3.8.16 torch-1.13.0+cu116 CUDA:0 (Tesla T4, 15110MiB)

hyperparameters: lr0=0.01, lrf=0.01, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=0.05, cls=0.5, cls_pw=1.0, obj=1.0, obj_pw=1.0, iou_t=0.2, anchor_t=4.0, fl_gamma=0.0, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.1, scale=0.5, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=1.0, mixup=0.0, copy_paste=0.0

ClearML: run 'pip install clearml' to automatically track, visualize and remotely train YOLOv5 🚀 in ClearML

Comet: run 'pip install comet_ml' to automatically track and visualize YOLOv5 🚀 runs in Comet

TensorBoard: Start with 'tensorboard --logdir runs/train', view at http://localhost:6006/

Downloading https://ultralytics.com/assets/Arial.ttf to /root/.config/Ultralytics/Arial.ttf...

100% 755k/755k [00:00<00:00, 38.4MB/s]

Downloading https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5s.pt to yolov5s.pt...

100% 14.1M/14.1M [00:00<00:00, 305MB/s]

from n params module arguments

0 -1 1 3520 models.common.Conv [3, 32, 6, 2, 2]

1 -1 1 18560 models.common.Conv [32, 64, 3, 2]

2 -1 1 18816 models.common.C3 [64, 64, 1]

3 -1 1 73984 models.common.Conv [64, 128, 3, 2]

4 -1 2 115712 models.common.C3 [128, 128, 2]

5 -1 1 295424 models.common.Conv [128, 256, 3, 2]

6 -1 3 625152 models.common.C3 [256, 256, 3]

7 -1 1 1180672 models.common.Conv [256, 512, 3, 2]

8 -1 1 1182720 models.common.C3 [512, 512, 1]

9 -1 1 656896 models.common.SPPF [512, 512, 5]

10 -1 1 131584 models.common.Conv [512, 256, 1, 1]

11 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

12 [-1, 6] 1 0 models.common.Concat [1]

13 -1 1 361984 models.common.C3 [512, 256, 1, False]

14 -1 1 33024 models.common.Conv [256, 128, 1, 1]

15 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

16 [-1, 4] 1 0 models.common.Concat [1]

17 -1 1 90880 models.common.C3 [256, 128, 1, False]

18 -1 1 147712 models.common.Conv [128, 128, 3, 2]

19 [-1, 14] 1 0 models.common.Concat [1]

20 -1 1 296448 models.common.C3 [256, 256, 1, False]

21 -1 1 590336 models.common.Conv [256, 256, 3, 2]

22 [-1, 10] 1 0 models.common.Concat [1]

23 -1 1 1182720 models.common.C3 [512, 512, 1, False]

24 [17, 20, 23] 1 16182 models.yolo.Detect [1, [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]], [128, 256, 512]]

smoke_YOLOv5s summary: 214 layers, 7022326 parameters, 7022326 gradients, 15.9 GFLOPs

Transferred 342/349 items from yolov5s.pt

AMP: checks passed ✅

optimizer: SGD(lr=0.01) with parameter groups 57 weight(decay=0.0), 60 weight(decay=0.0005), 60 bias

albumentations: Blur(p=0.01, blur_limit=(3, 7)), MedianBlur(p=0.01, blur_limit=(3, 7)), ToGray(p=0.01), CLAHE(p=0.01, clip_limit=(1, 4.0), tile_grid_size=(8, 8))

train: Scanning /content/yolov5/smoke/train/labels... 516 images, 0 backgrounds, 0 corrupt: 100% 516/516 [00:00<00:00, 2159.74it/s]

train: New cache created: /content/yolov5/smoke/train/labels.cache

train: Caching images (0.4GB ram): 100% 516/516 [00:01<00:00, 263.13it/s]

val: Scanning /content/yolov5/smoke/valid/labels... 147 images, 0 backgrounds, 0 corrupt: 100% 147/147 [00:00<00:00, 541.68it/s]

val: New cache created: /content/yolov5/smoke/valid/labels.cache

val: Caching images (0.1GB ram): 100% 147/147 [00:01<00:00, 105.44it/s]

AutoAnchor: 4.72 anchors/target, 1.000 Best Possible Recall (BPR). Current anchors are a good fit to dataset ✅

Plotting labels to runs/train/smoke_result/labels.jpg...

Image sizes 640 train, 640 val

Using 2 dataloader workers

Logging results to runs/train/smoke_result

Starting training for 100 epochs...

Epoch GPU_mem box_loss obj_loss cls_loss Instances Size

0/99 7.32G 0.112 0.02681 0 5 640: 100% 17/17 [00:09<00:00, 1.73it/s]

Class Images Instances P R mAP50 mAP50-95: 100% 3/3 [00:03<00:00, 1.18s/it]

all 147 147 0.00093 0.279 0.00127 0.000343

...

Epoch GPU_mem box_loss obj_loss cls_loss Instances Size

99/99 8.34G 0.02281 0.006368 0 5 640: 100% 17/17 [00:06<00:00, 2.59it/s]

Class Images Instances P R mAP50 mAP50-95: 100% 3/3 [00:01<00:00, 2.66it/s]

all 147 147 0.937 0.932 0.94 0.55

100 epochs completed in 0.229 hours.

Optimizer stripped from runs/train/smoke_result/weights/last.pt, 14.4MB

Optimizer stripped from runs/train/smoke_result/weights/best.pt, 14.4MB

Validating runs/train/smoke_result/weights/best.pt...

Fusing layers...

smoke_YOLOv5s summary: 157 layers, 7012822 parameters, 0 gradients, 15.8 GFLOPs

Class Images Instances P R mAP50 mAP50-95: 100% 3/3 [00:02<00:00, 1.30it/s]

all 147 147 0.943 0.939 0.949 0.559

Results saved to runs/train/smoke_result

CPU times: user 8.88 s, sys: 949 ms, total: 9.83 s

Wall time: 14min 28s
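The `17/17` and `3/3` counters in the log are the number of batches per epoch, which follows from the image counts and the batch size. The sketch below reproduces them. It assumes that `train.py` runs its in-training validation with twice the training batch size, which is my reading of the script rather than something stated in this post. `batches_per_epoch` is a hypothetical helper.

```python
import math


def batches_per_epoch(num_images, batch_size):
    """Batches needed to see every image once (the last batch may be partial)."""
    return math.ceil(num_images / batch_size)


print(batches_per_epoch(516, 32))      # training images, --batch 32
print(batches_per_epoch(147, 32 * 2))  # validation during training (assumed 2x batch)
```

With a batch size of 32, the 516 training images give 17 batches, and the 147 validation images at the assumed batch size of 64 give 3.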

In [16]:

%load_ext tensorboard

%tensorboard --logdir runs

Out [16]:

<IPython.core.display.Javascript object>

In [17]:

!ls /content/yolov5/runs/train/smoke_result

Out [17]:

confusion_matrix.png results.png

events.out.tfevents.1671509480.a93f7837e3e7.5947.0 train_batch0.jpg

F1_curve.png train_batch1.jpg

hyp.yaml train_batch2.jpg

labels_correlogram.jpg val_batch0_labels.jpg

labels.jpg val_batch0_pred.jpg

opt.yaml val_batch1_labels.jpg

P_curve.png val_batch1_pred.jpg

PR_curve.png val_batch2_labels.jpg

R_curve.png val_batch2_pred.jpg

results.csv weights

In [18]:

from IPython.display import Image

Image(filename='/content/yolov5/runs/train/smoke_result/results.png', width=800)

Out [18]:

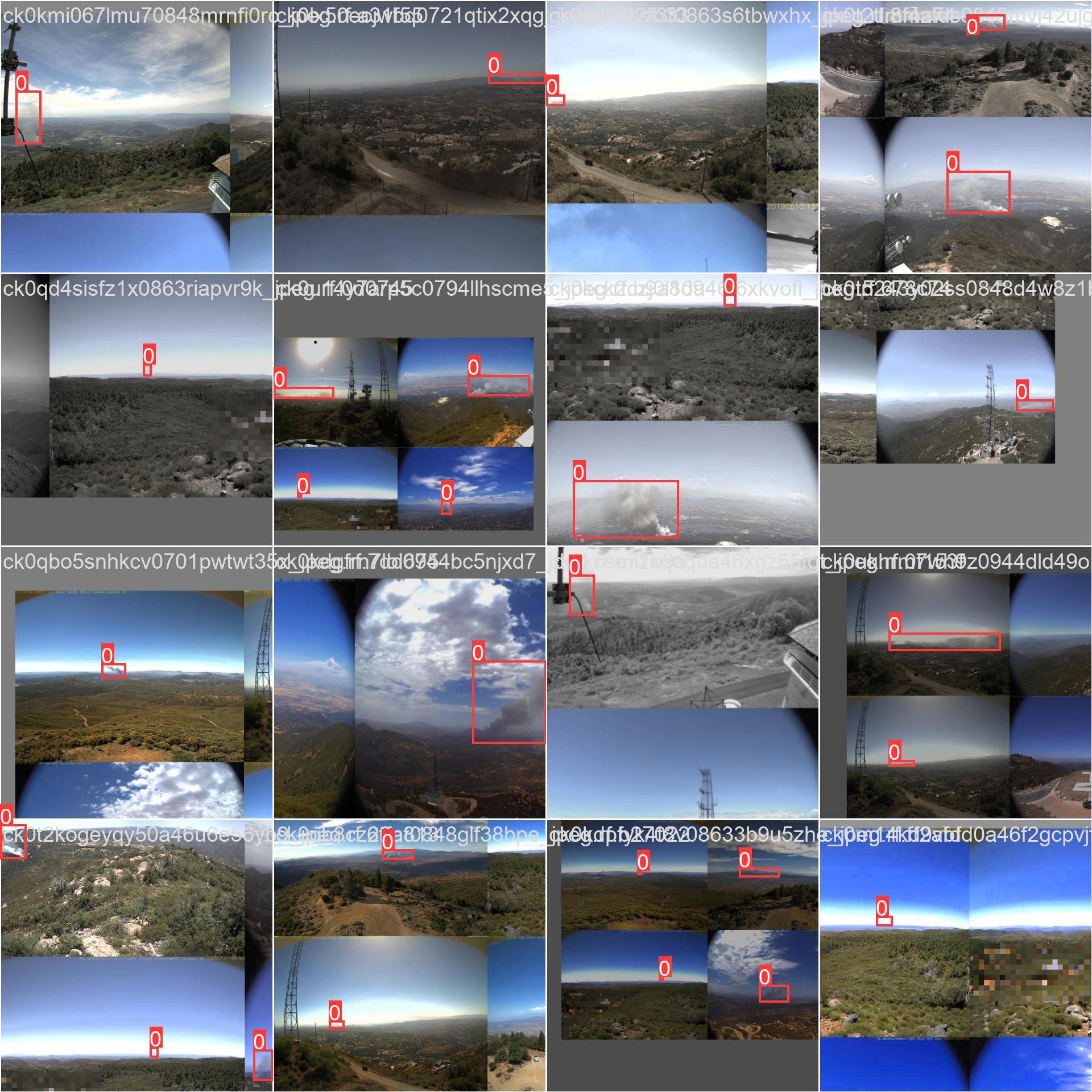
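The curves in `results.png` are drawn from `results.csv` in the same folder, so the best epoch can also be found programmatically. The sketch below assumes the header cells are padded with spaces and that the mAP column is named `metrics/mAP_0.5`. Both are assumptions about the file layout, so check the header of your own file first. `best_epoch` is a hypothetical helper.

```python
import csv
import io


def best_epoch(csv_text, metric="metrics/mAP_0.5"):
    """Return (epoch, value) for the row with the highest value of metric."""
    reader = csv.reader(io.StringIO(csv_text))
    header = [name.strip() for name in next(reader)]  # drop padding spaces
    epoch_idx, metric_idx = header.index("epoch"), header.index(metric)
    rows = [row for row in reader if row]
    best = max(rows, key=lambda row: float(row[metric_idx]))
    return int(best[epoch_idx]), float(best[metric_idx])


# Made-up rows for illustration only; they are not values from this training run.
sample = """   epoch,   metrics/mAP_0.5
       0,   0.10
       1,   0.50
       2,   0.40
"""
print(best_epoch(sample))
```

In the notebook, the text would come from `open('runs/train/smoke_result/results.csv').read()`.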

In [19]:

Image(filename='/content/yolov5/runs/train/smoke_result/train_batch0.jpg', width=800)

Out [19]:

In [20]:

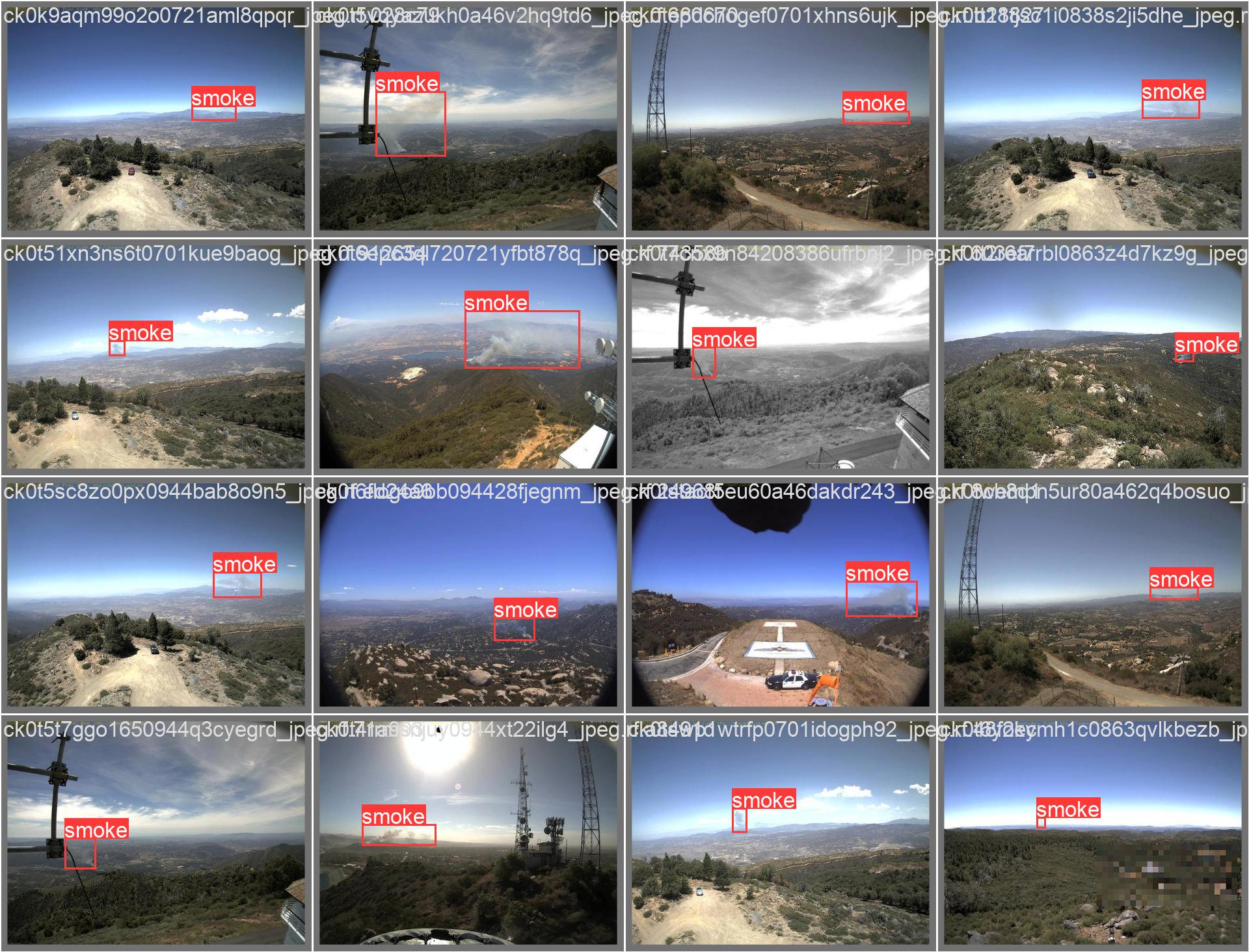

Image(filename='/content/yolov5/runs/train/smoke_result/val_batch0_labels.jpg', width=800)

Out [20]:

Validation

In [21]:

# validation data

!python val.py --weights runs/train/smoke_result/weights/best.pt \

--data ./smoke/data.yaml --img 640 --iou 0.65 --half

Out [21]:

val: data=./smoke/data.yaml, weights=['runs/train/smoke_result/weights/best.pt'], batch_size=32, imgsz=640, conf_thres=0.001, iou_thres=0.65, max_det=300, task=val, device=, workers=8, single_cls=False, augment=False, verbose=False, save_txt=False, save_hybrid=False, save_conf=False, save_json=False, project=runs/val, name=exp, exist_ok=False, half=True, dnn=False

YOLOv5 🚀 v7.0-42-g5545ff3 Python-3.8.16 torch-1.13.0+cu116 CUDA:0 (Tesla T4, 15110MiB)

Fusing layers...

smoke_YOLOv5s summary: 157 layers, 7012822 parameters, 0 gradients, 15.8 GFLOPs

val: Scanning /content/yolov5/smoke/valid/labels.cache... 147 images, 0 backgrounds, 0 corrupt: 100% 147/147 [00:00<?, ?it/s]

Class Images Instances P R mAP50 mAP50-95: 100% 5/5 [00:03<00:00, 1.37it/s]

all 147 147 0.944 0.939 0.948 0.557

Speed: 0.3ms pre-process, 4.0ms inference, 2.0ms NMS per image at shape (32, 3, 640, 640)

Results saved to runs/val/exp
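The `--iou 0.65` option sets the IoU threshold used by non-maximum suppression (it appears as `iou_thres=0.65` in the log). IoU is the overlap area of two boxes divided by the area of their union. Below is a minimal sketch of that calculation for corner-format boxes; `box_iou` is a hypothetical helper and the example boxes are made up.

```python
def box_iou(box_a, box_b):
    """IoU of two boxes given as (x1, y1, x2, y2) corner coordinates."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    intersection = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


# Two hypothetical 100x100 boxes, the second shifted right by 20 pixels.
print(box_iou((0, 0, 100, 100), (20, 0, 120, 100)))
```

These two boxes overlap with an IoU of about 0.67, which is above 0.65, so NMS at this threshold would keep only the higher-scoring one.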

In [22]:

# test data

!python val.py --weights runs/train/smoke_result/weights/best.pt \

--data ./smoke/data.yaml --img 640 --task test

Out [22]:

val: data=./smoke/data.yaml, weights=['runs/train/smoke_result/weights/best.pt'], batch_size=32, imgsz=640, conf_thres=0.001, iou_thres=0.6, max_det=300, task=test, device=, workers=8, single_cls=False, augment=False, verbose=False, save_txt=False, save_hybrid=False, save_conf=False, save_json=False, project=runs/val, name=exp, exist_ok=False, half=False, dnn=False

YOLOv5 🚀 v7.0-42-g5545ff3 Python-3.8.16 torch-1.13.0+cu116 CUDA:0 (Tesla T4, 15110MiB)

Fusing layers...

smoke_YOLOv5s summary: 157 layers, 7012822 parameters, 0 gradients, 15.8 GFLOPs

test: Scanning /content/yolov5/smoke/test/labels... 74 images, 0 backgrounds, 0 corrupt: 100% 74/74 [00:00<00:00, 424.05it/s]

test: New cache created: /content/yolov5/smoke/test/labels.cache

Class Images Instances P R mAP50 mAP50-95: 100% 3/3 [00:02<00:00, 1.45it/s]

all 74 74 0.852 0.932 0.939 0.547

Speed: 0.3ms pre-process, 6.8ms inference, 2.0ms NMS per image at shape (32, 3, 640, 640)

Results saved to runs/val/exp2

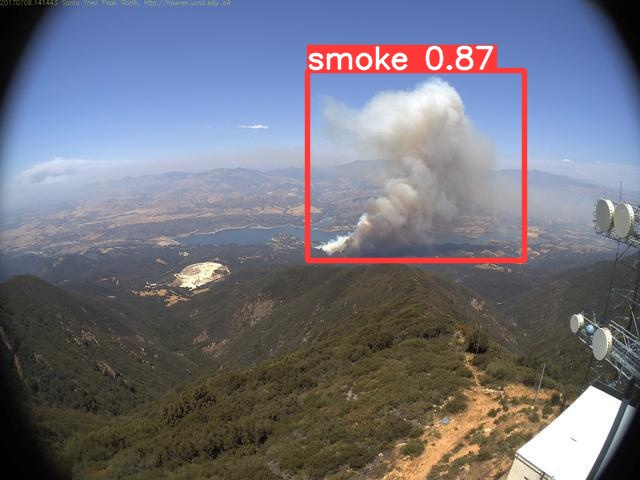
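Precision and recall from the two runs can be folded into a single F1 score to compare the validation and test splits. This is the standard harmonic-mean formula, shown here as a small sketch; `f1_score` is defined locally and is not a YOLOv5 function.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# P and R copied from the "all" rows of Out [21] and Out [22].
valid_f1 = f1_score(0.944, 0.939)
test_f1 = f1_score(0.852, 0.932)
print(f"valid F1: {valid_f1:.3f}")
print(f"test  F1: {test_f1:.3f}")
```

F1 comes out at about 0.94 on the validation split and about 0.89 on the test split. The gap comes almost entirely from the lower test precision, since recall and mAP50 are nearly identical on both splits.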

Inference

In [23]:

%ls runs/train/smoke_result/weights/

Out [23]:

best.pt last.pt

In [24]:

!python detect.py --weights runs/train/smoke_result/weights/best.pt \

--img 640 --conf 0.4 --source ./smoke/test/images

Out [24]:

detect: weights=['runs/train/smoke_result/weights/best.pt'], source=./smoke/test/images, data=data/coco128.yaml, imgsz=[640, 640], conf_thres=0.4, iou_thres=0.45, max_det=1000, device=, view_img=False, save_txt=False, save_conf=False, save_crop=False, nosave=False, classes=None, agnostic_nms=False, augment=False, visualize=False, update=False, project=runs/detect, name=exp, exist_ok=False, line_thickness=3, hide_labels=False, hide_conf=False, half=False, dnn=False, vid_stride=1

YOLOv5 🚀 v7.0-42-g5545ff3 Python-3.8.16 torch-1.13.0+cu116 CUDA:0 (Tesla T4, 15110MiB)

Fusing layers...

smoke_YOLOv5s summary: 157 layers, 7012822 parameters, 0 gradients, 15.8 GFLOPs

image 1/74 /content/yolov5/smoke/test/images/ck0kcoc8ik6ni0848clxs0vif_jpeg.rf.8b4629777ffe1d349cc970ee8af59eac.jpg: 480x640 1 smoke, 11.7ms

image 2/74 /content/yolov5/smoke/test/images/ck0kd4afx8g470701watkwxut_jpeg.rf.bb5a1f2c2b04be20c948fd3c5cec33ff.jpg: 480x640 1 smoke, 10.0ms

...

image 73/74 /content/yolov5/smoke/test/images/ck0ujmglz85u10a468qt0d4fc_jpeg.rf.18d02755e8c96c330cbe6169073b14ce.jpg: 480x640 1 smoke, 7.7ms

image 74/74 /content/yolov5/smoke/test/images/ck0ujw76nui2g0863hz7l7uqo_jpeg.rf.f6012893d20f44f903489d57c35ca95d.jpg: 480x640 1 smoke, 8.0ms

Speed: 0.4ms pre-process, 8.7ms inference, 0.8ms NMS per image at shape (1, 3, 640, 640)

Results saved to runs/detect/exp
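The `--conf 0.4` option (shown as `conf_thres=0.4` in the log) discards detections whose confidence falls below 0.4, whereas the validation runs above used `conf_thres=0.001`. The sketch below is a simplified stand-in for that filtering step, not the code `detect.py` actually runs. `filter_by_confidence` and the sample detections are hypothetical.

```python
def filter_by_confidence(detections, conf_threshold=0.4):
    """Keep detections whose confidence is at least conf_threshold."""
    return [det for det in detections if det["conf"] >= conf_threshold]


# Hypothetical detections for one image; the numbers are made up for illustration.
detections = [
    {"box": (120, 80, 260, 190), "conf": 0.83, "cls": "smoke"},
    {"box": (400, 60, 450, 100), "conf": 0.12, "cls": "smoke"},
]
kept = filter_by_confidence(detections)
print(len(kept), kept[0]["conf"])
```

Raising the threshold trades recall for precision: fewer false alarms are drawn, but faint smoke plumes are more likely to be dropped.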

In [25]:

import random

image_name = random.choice(glob('runs/detect/exp/*.jpg'))

display(Image(filename=image_name))

Out [25]:

Exporting the Model

In [26]:

from google.colab import drive

drive.mount('/content/drive')

Out [26]:

Mounted at /content/drive

In [27]:

%cp /content/yolov5/runs/train/smoke_result/weights/best.pt '/content/drive/MyDrive/Colab Notebooks/deep_learning/model/18_yolov5_smoke'
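If `18_yolov5_smoke` is meant to be a folder and it does not exist yet, `cp` will instead create a file with that name. A minimal sketch of a safer copy in plain Python follows, assuming the destination should be a directory; `export_weights` is a hypothetical helper.

```python
import shutil
from pathlib import Path


def export_weights(src, dest_dir):
    """Copy a weights file into dest_dir, creating the directory if needed."""
    dest_dir = Path(dest_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    return Path(shutil.copy2(src, dest_dir))


# Example call with the paths used in this post (run after mounting Drive):
# export_weights(
#     "/content/yolov5/runs/train/smoke_result/weights/best.pt",
#     "/content/drive/MyDrive/Colab Notebooks/deep_learning/model/18_yolov5_smoke",
# )
```

The function returns the path of the copied file, so the result can be printed or checked right away.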

Reference

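- This post summarizes the lectures given by instructor 심선조 in the SeSAC course "Training Application SW Developers Using AI Natural Language Processing and Computer Vision Technologies".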
